What is Explainable AI? A Simple Guide for Businesses and Decision Makers


Introduction to Explainable AI

Artificial intelligence is everywhere today. It suggests what we should watch, helps approve loans, detects fraud, advises doctors, and even supports hiring decisions. But concern is growing, because many AI systems function as a black box. They produce answers without showing how or why they arrived at them.

This is where Explainable AI comes into play.

Explainable AI enables humans to understand how AI systems make decisions. It prioritizes clarity, transparency, and trust. Businesses and users can examine the reasoning behind an AI output rather than accepting it at face value.

As AI use increases across industries, explainable AI is becoming a necessity rather than an option.

What is Explainable AI in Simple Terms

Explainable AI refers to artificial intelligence systems that can clearly explain their decisions in terms that people can understand.

Traditional AI models frequently produce outcomes without revealing the reasoning behind them. Explainable AI opens the black box and answers questions such as:

- Why did the AI make this decision?

- Which data influenced the result?

- How confident is the AI in its prediction?

In simple words, Explainable AI makes AI more transparent, understandable, and trustworthy.

For example, if an AI system rejects a loan application, Explainable AI can show the factors that influenced the rejection, such as income level, credit score, or repayment history.

This transparency helps both businesses and users feel confident about AI-driven decisions.
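To make the loan example concrete, here is a minimal sketch of how a simple linear scoring model can report what each factor contributed to a decision. The feature names, weights, intercept, and threshold are all hypothetical values chosen for illustration, not figures from any real lender or product.

```python
# Hypothetical weights for an illustrative linear credit score.
# Positive weights push toward approval, negative weights toward rejection.
WEIGHTS = {
    "income_thousands": 0.04,
    "credit_score": 0.01,
    "missed_payments": -0.8,
}
BIAS = -8.0       # hypothetical intercept
THRESHOLD = 0.0   # approve when the score is at or above this value


def explain_loan_decision(applicant):
    """Return the decision, the score, and each factor's contribution."""
    contributions = {
        name: WEIGHTS[name] * applicant[name] for name in WEIGHTS
    }
    score = BIAS + sum(contributions.values())
    decision = "approved" if score >= THRESHOLD else "rejected"
    # Sort ascending so the factors that hurt the application most come first.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return decision, score, ranked


applicant = {"income_thousands": 40, "credit_score": 580, "missed_payments": 3}
decision, score, ranked = explain_loan_decision(applicant)
print(decision, round(score, 2))
for name, value in ranked:
    print(f"{name}: {value:+.2f}")
```

Because the model is linear, each contribution is simply the weight multiplied by the applicant's value, so the explanation is exact for this model. More complex models usually need approximate explanation methods instead.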

Why Explainable AI is Important for Businesses

Today's businesses depend heavily on artificial intelligence for automation, forecasting, and decision-making. However, without explainability, AI can create significant risks.

Here is why Explainable AI is critical for modern businesses.

Builds Trust with Customers

Customers are more likely to trust AI-powered systems when decisions are clearly explained. Transparency reduces fear and confusion.

Improves Decision Making

When business leaders can see how AI generates its insights, they can make smarter strategic decisions.

Reduces Legal and Compliance Risks

Many industries, including healthcare, finance, and insurance, require explanations for automated decisions. Explainable AI helps with regulatory compliance.

Identifies Bias and Errors

Explainable AI can help detect biased or inaccurate data that influences outcomes.

Encourages Ethical AI Use

Explainability is the basis for responsible artificial intelligence. It helps ensure that AI systems are fair, accountable, and human-centered.

The Problem with Black Box AI Models

Black box AI refers to models that provide outputs without any explanation.

While these models may be highly accurate, they raise serious concerns:

- Lack of transparency

- Difficulty in auditing decisions

- Higher risk of bias

- Reduced user trust

- Regulatory challenges

For example, if an AI system advises against a medical treatment without explanation, doctors and patients have little basis for trusting the decision.

Explainable AI addresses this problem by adding visibility and accountability.

How Explainable AI Works

Explainable AI offers techniques and tools that make AI decisions understandable. The right technique depends on the type of model.

Model Based Explainability

Some artificial intelligence models are inherently interpretable. These include decision trees, rule-based systems, and linear models. Their logic is simple to follow.
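As an illustration of an inherently interpretable model, the sketch below shows a tiny rule-based system that returns its reason together with its decision. The rules, thresholds, and field names are hypothetical and exist only to show the idea.

```python
# Each rule is (description, condition, outcome).
# The rules and thresholds are hypothetical, for illustration only.
RULES = [
    (
        "amount above 10,000 from an account under 30 days old",
        lambda t: t["amount"] > 10_000 and t["account_age_days"] < 30,
        "flag",
    ),
    (
        "transaction country differs from the card's home country",
        lambda t: t["country"] != t["home_country"],
        "review",
    ),
]


def classify(transaction):
    """Return (outcome, reason) from the first rule that matches."""
    for description, condition, outcome in RULES:
        if condition(transaction):
            return outcome, description
    return "allow", "no rule matched"


transaction = {
    "amount": 15_000,
    "account_age_days": 5,
    "country": "IN",
    "home_country": "IN",
}
outcome, reason = classify(transaction)
print(f"{outcome}: {reason}")
```

The explanation here is the rule itself, so nothing extra has to be computed. The tradeoff is that hand-written rules may not capture patterns a learned model would find.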

Post Hoc Explainability

Post hoc explanation methods are applied after a complex model, such as a deep learning model, has made a prediction. These methods explain predictions without modifying the model.

Common approaches include:

- Feature importance analysis

- Visualization techniques

- Local explanations for individual predictions
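One widely used form of feature importance analysis is permutation importance: shuffle one input column at a time and measure how much the model's accuracy drops. The sketch below is a minimal version under stated assumptions. The `black_box` function is a hypothetical stand-in for an opaque model, the data is randomly generated, and the labels are taken from the model's own predictions, so the result shows how strongly the predictions depend on each input. In practice you would use real labels on held-out data.

```python
import random


def black_box(row):
    """Hypothetical opaque model: we only call it, never inspect it."""
    income, credit_score, _shoe_size = row
    return 1 if (0.5 * income + 0.1 * credit_score) >= 90 else 0


def accuracy(model, rows, labels):
    """Fraction of rows where the model's output matches the label."""
    correct = sum(model(r) == y for r, y in zip(rows, labels))
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_features, seed=0):
    """Accuracy drop after shuffling each feature column in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        # Rebuild each row with only feature j replaced by a shuffled value.
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(baseline - accuracy(model, shuffled, labels))
    return importances


# Synthetic data: income (thousands), credit score, and an irrelevant feature.
data_rng = random.Random(42)
rows = [
    (data_rng.uniform(20, 120), data_rng.uniform(300, 850), data_rng.uniform(36, 47))
    for _ in range(200)
]
labels = [black_box(r) for r in rows]

importances = permutation_importance(black_box, rows, labels, n_features=3)
for name, value in zip(["income", "credit_score", "shoe_size"], importances):
    print(f"{name}: {value:.3f}")
```

The irrelevant feature scores zero because the model never reads it, so shuffling it cannot change any prediction. A larger drop for a feature suggests the model relies on it more heavily.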

Human Centered Explanations

Explainable AI also focuses on presenting explanations in a way that non-technical users can understand, using:

- Clear language

- Visual charts

- Simple reasoning

This human-friendly approach is crucial for business adoption.
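As a rough sketch of what clear language can look like in practice, the function below turns numeric factor contributions into a single readable sentence. The function name, the contribution values, and the display labels are hypothetical. A real system would take them from whichever explanation method it uses.

```python
def to_plain_language(decision, contributions, labels, top_n=2):
    """Turn numeric factor contributions into one readable sentence."""
    # Keep the factors with the largest absolute effect on the outcome.
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    parts = []
    for name, value in ranked[:top_n]:
        direction = "helped" if value > 0 else "hurt"
        parts.append(f"{labels[name]} {direction} the application")
    return f"The application was {decision}. Main factors: " + "; ".join(parts) + "."


# Hypothetical contributions from an upstream explanation method.
contributions = {"credit_score": 5.8, "missed_payments": -2.4, "income": 1.6}
labels = {
    "credit_score": "your credit score",
    "missed_payments": "your missed payments",
    "income": "your income",
}
message = to_plain_language("rejected", contributions, labels)
print(message)
```

Limiting the sentence to the top few factors is a design choice: a short explanation is easier for a non-technical reader to act on than a full list of every input.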

Real World Examples of Explainable AI

Explainable AI is already being used across industries. Here are some practical examples.

Explainable AI in Healthcare

AI helps doctors diagnose diseases and recommend treatments. Explainable AI shows which symptoms, scans, or medical history influenced the diagnosis.

This improves doctor confidence and patient trust.

Explainable AI in Finance

Banks use AI for credit scoring and fraud detection. Explainable AI helps ensure that loan decisions can be justified to customers and regulators.

Explainable AI in Insurance

Insurance companies use AI to assess claims. Explainable AI explains why a claim was approved or rejected.

Explainable AI in Hiring

AI-driven recruitment tools can explain why a candidate was shortlisted or rejected, which helps reduce bias and promote fairness.

Explainable AI in E-commerce

AI recommends products based on browsing behavior. Explainable AI can show why a product was suggested, improving user engagement.

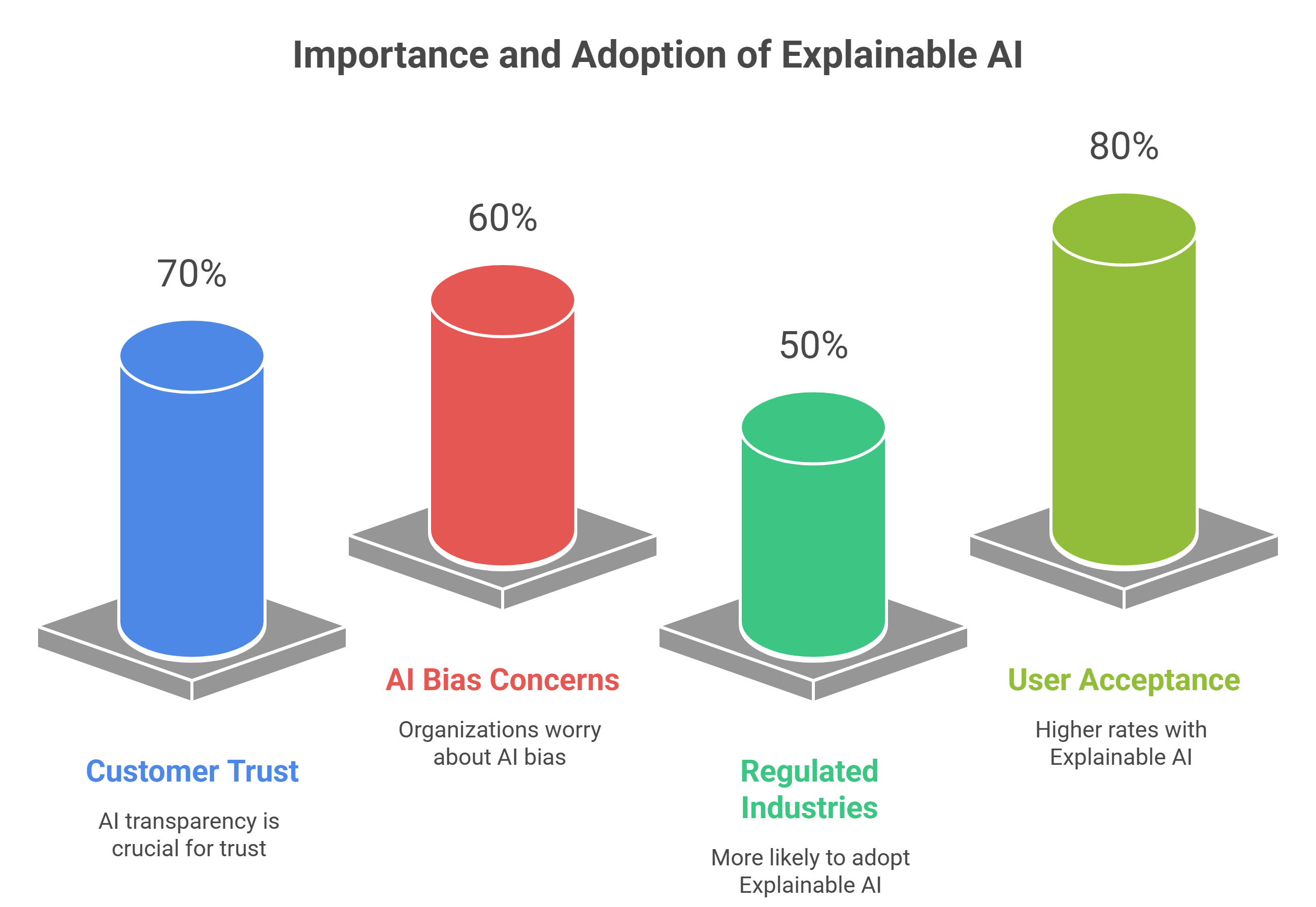

Statistics Showing the Importance of Explainable AI

Here are some key insights you can use for visuals or infographics.

These trends show that Explainable AI is becoming a business necessity.

Benefits of Explainable AI for Enterprises

Explainable AI offers both technical and business advantages.

Increased Transparency

Clear explanations improve understanding across teams.

Better Compliance

Supports legal and regulatory standards.

Faster Adoption of AI

Employees and stakeholders trust AI systems more.

Improved Model Performance

Understanding model behavior helps refine and improve accuracy.

Stronger Brand Reputation

Ethical and transparent AI builds long-term credibility.

Explainable AI vs Traditional AI

Traditional AI focuses mainly on performance and accuracy. Explainable AI balances performance with understanding.

Traditional AI

- High accuracy

- Low transparency

- Limited trust

Explainable AI

- High transparency

- Better accountability

- Higher trust

Businesses today need both accuracy and explainability.

Challenges of Implementing Explainable AI

Despite its benefits, Explainable AI comes with challenges.

- Complexity of advanced models

- Tradeoff between accuracy and explainability

- Lack of standard frameworks

- Need for skilled AI professionals

However, these challenges can be addressed with the right strategy and technology partner.

Explainable AI and Ethical AI Development

Explainable AI plays a major role in ethical AI.

- Supports fairness

- Reduces discrimination

- Promotes accountability

- Protects user rights

Ethical AI is no longer optional. Governments, customers, and investors expect responsible AI practices.

How Businesses Can Get Started with Explainable AI

Here are simple steps businesses can follow.

- Identify high-impact AI use cases

- Choose explainable or interpretable models

- Use explainability tools and frameworks

- Train teams on AI transparency

- Partner with experienced AI developers

Role of Redblox Technologies in Explainable AI Development

Redblox Technologies is an AI Development Company located in Pondicherry, India, helping businesses build intelligent and transparent AI solutions.

We focus on:

- Custom AI development

- Explainable AI systems

- Ethical and responsible AI frameworks

- Industry-specific AI solutions

- Scalable enterprise AI platforms

Our approach ensures that AI systems are not just effective, but also understandable, compliant, and trustworthy.

Whether you're developing AI for healthcare, banking, retail, or enterprise automation, our team creates AI that people can trust.

Explainable AI Use Cases for Future Businesses

As AI evolves, Explainable AI will play a key role in future innovations.

- Autonomous systems

- Smart cities

- AI-powered education

- Customer experience platforms

- Decision intelligence tools

Businesses that invest early in Explainable AI will gain a competitive advantage.

Final Thoughts

Explainable AI is shaping the future of Artificial Intelligence. It bridges the gap between machine intelligence and human understanding.

For businesses, it means more trust, better decisions, reduced risk, and stronger relationships with customers.

As AI continues to influence essential decisions, transparency and accountability will be key to effective adoption.

Partnering with professional AI companies such as Redblox Technologies ensures that your AI systems are not just intelligent, but also responsible and future-ready.

Frequently Asked Questions (FAQs)

Is Explainable AI only for large enterprises?

No. Explainable AI is useful for startups, SMEs, and enterprises alike.

Does Explainable AI reduce AI performance?

Not necessarily. Many modern techniques balance accuracy and explainability.

Is Explainable AI mandatory?

In many regulated industries, it is becoming essential for compliance.

Can existing AI systems be made explainable?

Yes. Post hoc explainability techniques can be applied to existing models.
